Data Analysis and Manifold Learning (DAML)

Lecture series by Radu HORAUD,

INRIA Grenoble Rhone-Alpes

Winter-Spring 2011

Short course description (pdf)

Data Analysis and Manifold Learning (DAML) Lecture series by Radu HORAUD, INRIA Grenoble Rhone-Alpes Winter-Spring 2011 Short course description (pdf)

|

|

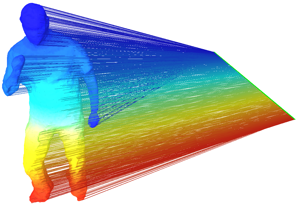

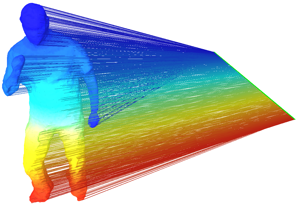

This is a 3D shape that is represented by an undirected weighted graph whose vertices correspond to 3D points and whose edges correspond to the local topology of the shape. Each graph vertex is projected onto the first non-null eigenvector of the graph's Laplacian matrix. This eigenvector may be viewed as the intrinsic principal direction of the shape, invariant to such shape deformations as articulated motion. |

Brief course description |

Time and date |

Location |

Material |

| Lecture #1: Introduction to spectral and graph-based methods | 14h-16h 7/1/2011 (Friday) | UFR-IMA Grenoble: F113 |

Horaud-DAML1.pdf |

| Lecture #2: Symmetric matrices and their properties | 14h-16h 14/1/2011 (Friday) | UFR-IMA Grenoble: F113 |

Horaud-DAML2.pdf |

| Lecture #3: Graphs, graph matrices, and spectral embeddings of graphs | 14h-16h 21/1/2011 (Friday) | UFR-IMA Grenoble: F107 |

Horaud-DAML3.pdf |

| Lecture #4: Gaussian mixtures and the EM algorithm | 14h-16h 4/2/2011 (Friday) | UFR-IMA Grenoble: F116 |

Horaud-DAML4.pdf |

| Lecture #5: Principal component analysis | 14h-16h 11/2/2011 (Friday) | UFR-IMA Grenoble: F114 |

Horaud-DAML5.pdf |

| Lecture #6: Bayesian PCA and factor analysis | 14h-16h 18/2/2011 (Friday) | UFR-IMA Grenoble: F114 |

Horaud-DAML6.pdf |

| Lecture #7: Laplacian embedding and spectral clustering | 14h-16h 25/2/2011 (Friday) | UFR-IMA Grenoble: F114 |

Horaud-DAML7.pdf |

| Lecture #8: Introduction to kernel methods, kernel PCA | 14h-16h 18/3/2011 (Friday) | UFR-IMA Grenoble: F114 |

Horaud-DAML8.pdf |

| Lecture #9: Diffusion kernels | 14h-16h 25/3/2011 (Friday) | UFR-IMA Grenoble: F114 |

Horaud-DAML9.pdf |

| Lecture #10: Spectral matching | 14h-16h 8/4/2011 (Friday) | UFR-IMA Grenoble: F113 |

Horaud-DAML10.pdf |

| Lecture #11: Other methods: LLE and LTSA | 14h-16h 22/4/2011 (Friday) | UFR-IMA Grenoble: F113 |

Horaud-DAML11.pdf |

| Lecture #12: Manifold learning applications | 14h-16h 13/5/2011 (Friday) | UFR-IMA Grenoble: F114 |

Horaud-DAML12.pdf |

Brief overview of spectral and graph-based methods, such as principal component analysis (PCA), multi dimensional scaling (MDS), ISOMAP, LLE, Laplacian embedding, etc.

Further readings: L. Saul et al. Spectral Methods for Dimensionality Reduction. O. Chapelle, B. Schoelkopf, and A. Zien (eds.), Semisupervised Learning, pages 293-308. MIT Press: Cambridge, MA.

Eigenvalues and eigenvectors. Practical computation for dense ans sparse matrices. Covariance matrix. Gram matrix.

What is the matrix of a graph? Properties of graph matrices. Spectral graph theory. Undirected weighted graphs and Markov chains. Graph distances. Graph construction.

The univariate and multivariate Gaussian distributions. The Gaussian mixture model. The expectaction-maximization algorithm for Gaussian mixtures. The curse of dimensionality.

Formal derivation. Linear discriminant analysis.

EM algorithm for PPCA. Bayesian PCA and model selection. Factor analysis.

Spectral clustering. Semi-supervised spectral clustering. Links with other methods: Normalized cuts and random walks on graphs. Image and shape segmentation

Properties of kernels. Kernel PCA.

Heat diffusion on Riemannian manifolds. Heat diffusion on graphs. Diffusion embedding. Scale-space representation. Choosing the dimension of the embedded space.

Dense and sparse matching methods. Semi-supervised matching.

A list of (short) papers related to manifold learning:

Medical image analysis, brain imagery, auditory perception, data mining, Google's PageRank algorithm, etc.