Three Dimensional Sensors (3DS)

Lecture series by Radu HORAUD,

INRIA Grenoble Rhone-Alpes (in Montbonnot)

The objective of this course is to familiarize Master's and PhD students, researchers, and engineers with novel three-dimensional sensing technologies, as well as with the methods and algorithms needed to process depth data. Additionally, the course will address how to combine 3D sensors with high-definition color cameras in order to obtain very rich (3D + RGB) representations.

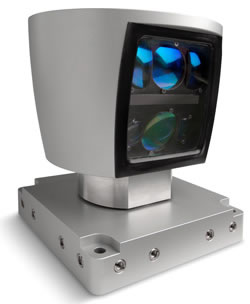

The emergence of three-dimensional sensors, e.g., Microsoft Kinect and Asus Xtion Pro Live (structured-light sensors), Mesa Imaging SR4000 (time-of-flight camera), or Velodyne HDL-64 laser range finder (LiDAR), has revolutionized the way many research topics are addressed. These topics were traditionally tackled with either stereoscopic systems built from color (2D) cameras or complex 3D scanners. The new sensors capture a depth image (a depth value at each pixel) at 10 to 30 frames per second. This opens up a wide range of real-time applications in domains such as computer vision, robot perception, human-robot interaction, multimodal interfaces, computer graphics, computer entertainment, and augmented reality.
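To make "a depth value at each pixel" concrete, the sketch below back-projects a depth image into a set of 3D points expressed in the camera frame. It is only an illustration under stated assumptions, not the course's method. It assumes an ideal pinhole camera with hypothetical intrinsic parameters `fx`, `fy` (focal lengths in pixels) and `cx`, `cy` (principal point), no lens distortion, and depth stored as the Z coordinate along the optical axis (some sensors instead report distance along the viewing ray, which would need conversion first). The function name `depth_to_points` is hypothetical, and pixels with depth less than or equal to zero are treated as missing measurements.

```python
from typing import List, Tuple

Point3D = Tuple[float, float, float]


def depth_to_points(depth: List[List[float]], fx: float, fy: float,
                    cx: float, cy: float) -> List[Point3D]:
    """Back-project a depth image into 3D camera coordinates (hypothetical helper).

    Assumes an ideal pinhole model (intrinsics fx, fy, cx, cy, no distortion)
    and that each depth value is the Z coordinate along the optical axis.
    Pixels with depth <= 0 are treated as invalid and skipped.
    """
    points: List[Point3D] = []
    for v, row in enumerate(depth):        # v: row index (image y coordinate)
        for u, z in enumerate(row):        # u: column index (image x coordinate)
            if z <= 0.0:                   # no valid measurement at this pixel
                continue
            x = (u - cx) * z / fx          # inverse of the projection u = fx * X / Z + cx
            y = (v - cy) * z / fy          # inverse of the projection v = fy * Y / Z + cy
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    # Tiny 2x2 depth image with one invalid pixel (depth 0.0).
    tiny = [[1.0, 0.0],
            [2.0, 1.0]]
    print(depth_to_points(tiny, fx=1.0, fy=1.0, cx=0.0, cy=0.0))
```

Combining such points with an RGB camera would additionally require the relative pose and intrinsics of the color camera, so that each 3D point can be projected into the color image.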